Intel recently announced a new family of processors for enthusiasts, the Core X-series, and it’s anchored by the company’s first 18-core CPU, the i9-7980XE.

Priced at $1,999, the 7980XE is clearly not a chip you’ll see in an average desktop. Instead, it’s more of a statement from Intel. With 18 cores, it edges past AMD’s 16-core Threadripper CPU, which was slated to be that company’s most powerful consumer processor for 2017. It also gives Intel yet another way to satisfy power-hungry users who might want to do things like play games in 4K while broadcasting them in HD over Twitch. And, as if its massive core count weren’t enough, the i9-7980XE is also the first Intel consumer chip that packs in over a teraflop’s worth of computing power.

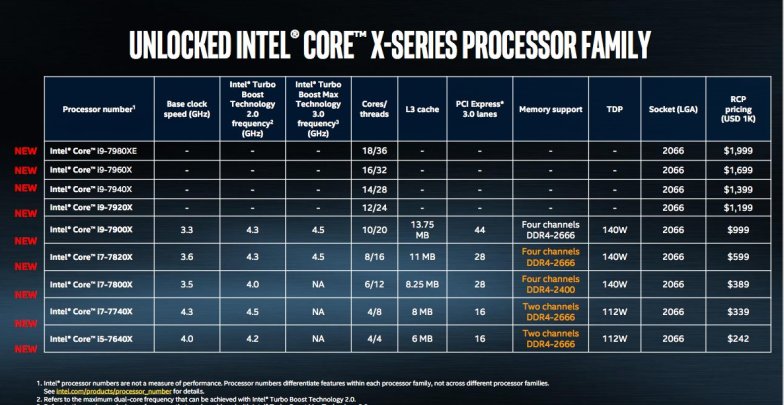

If 18 cores are overkill for you, Intel also has other Core i9 chips in 10-, 12-, 14- and 16-core variants. Perhaps the best news for hardware geeks: The 10-core i9-7900X will retail for $999, a significant discount from last year’s 10-core chip.

All of the i9 chips feature base clock speeds of 3.3GHz, reaching up to 4.3GHz dual-core speeds with Turbo Boost 2.0 and 4.5GHz with Turbo Boost Max 3.0, an upgraded version of Intel’s Turbo Boost technology. The company points out that while the additional cores on the Core X models will improve multitasking performance, technologies like Turbo Boost Max 3.0 ensure that each individual core also performs better. (Intel claims that the Core X series delivers 10 percent faster multithreaded performance and 15 percent faster single-threaded performance than the previous generation.)

(via Engadget, The Verge)