Scientists from the Institute of Physics and Technology of the Russian Academy of Sciences and MIPT have created a higher-dimensional quantum computer memory by letting two electrons loose in a system of quantum dots. In their study published in Scientific Reports, the researchers demonstrate for the first time how quantum walks of several electrons can help implement quantum computation.

Abstraction – Walking Electrons

“By studying the system with two electrons, we solved the problems faced in the general case of two identical interacting particles. This paves the way toward compact high-level quantum structures,” says Leonid Fedichkin, associate professor at MIPT’s Department of Theoretical Physics.

In a matter of hours, a quantum computer would be able to hack into the most popular cryptosystem used by web browsers. As far as more benevolent applications are concerned, a quantum computer would be capable of molecular modeling that accounts for all interactions between the particles involved. This, in turn, would enable the development of highly efficient solar cells and new drugs.

As it turns out, the unstable nature of the connection between qubits remains the major obstacle preventing the use of quantum walks of particles for quantum computation. Unlike their classical analogs, quantum structures are extremely sensitive to external noise. To prevent a system of several qubits from losing the information stored in it, liquid nitrogen (or helium) needs to be used for cooling. A research team led by Prof. Fedichkin demonstrated that a qubit could be physically implemented as a particle “taking a quantum walk” between two extremely small semiconductors known as quantum dots, which are connected by a “quantum tunnel.”

Quantum dots act as potential wells for an electron; therefore, the position of the electron can be used to encode the two basis states of the qubit, |0⟩ or |1⟩.
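As a rough illustration of a position-encoded qubit, the sketch below models one electron tunneling between two dots as an ideal two-level system. The model is an assumption made here for illustration, not the researchers' own: the Hamiltonian is taken to be H = -γ(|0⟩⟨1| + |1⟩⟨0|) with ħ = 1, noise is ignored, and the function name and the `tunneling` parameter are hypothetical. Under those assumptions, an electron that starts in dot 0 is found in dot 1 with probability sin²(γt).

```python
import math


def right_dot_probability(time: float, tunneling: float = 1.0) -> float:
    """Probability of finding the electron in dot 1 at the given time.

    Assumes an idealized, noise-free double dot with tunneling rate
    `tunneling` (hbar = 1) and the electron starting in dot 0.
    """
    return math.sin(tunneling * time) ** 2


if __name__ == "__main__":
    # Sample the oscillation over half a period: the electron moves
    # from dot 0 (state |0>) to dot 1 (state |1>).
    for step in range(5):
        t = step * math.pi / 8
        print(f"t = {t:.3f}  P(dot 1) = {right_dot_probability(t):.3f}")
```

In this idealized picture the electron oscillates back and forth between the two wells, so a measurement at an intermediate time finds it in either dot with some probability, which is what lets its position serve as a qubit.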

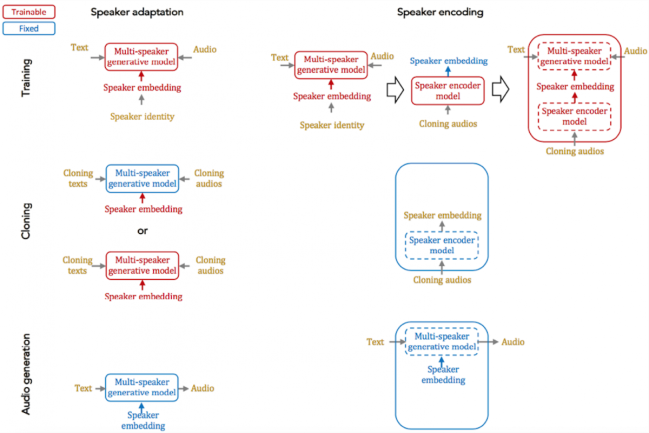

The blue and purple dots in the diagrams are the states of the two connected qudits (qutrits and ququarts are shown in (a) and (b) respectively). Each cell in the square diagrams on the right side of each figure (a-d) represents the position of one electron (i = 0, 1, 2, … along the horizontal axis) versus the position of the other electron (j = 0, 1, 2, … along the vertical axis). The cells color-code the probability of finding the two electrons in the corresponding dots with numbers i and j when a measurement of the system is made. Warmer colors denote higher probabilities. Credit: MIPT

If an entangled state is created between several qubits, their individual states can no longer be described separately from one another, and any valid description must refer to the state of the whole system. This means that a system of three qubits has a total of eight basis states and is in a superposition of them: A|000⟩+B|001⟩+C|010⟩+D|100⟩+E|011⟩+F|101⟩+G|110⟩+H|111⟩. By influencing the system, one inevitably affects all of the eight coefficients, whereas influencing a system of regular bits only affects their individual states. By implication, n bits can store n variables, while n qubits can store 2^n variables. Qudits offer an even greater advantage since n four-level qudits (aka ququarts) can encode 4^n, or 2^n×2^n variables. To put this into perspective, 10 ququarts store approximately 100,000 times more information than 10 bits. With greater values of n, the zeros in this number start to pile up very quickly.
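The counting argument above can be checked with a few lines of arithmetic. This is a minimal sketch; the function names are chosen here for illustration and do not come from the study. It counts n variables for n classical bits and d to the power n coefficients for n d-level qudits.

```python
def classical_variables(n: int) -> int:
    """n classical bits hold n independent binary variables."""
    return n


def qudit_coefficients(n: int, levels: int = 2) -> int:
    """Number of basis-state coefficients for n qudits with `levels` levels each."""
    return levels ** n


if __name__ == "__main__":
    n = 10
    qubits = qudit_coefficients(n, levels=2)    # 2^10
    ququarts = qudit_coefficients(n, levels=4)  # 4^10 = 2^10 * 2^10
    ratio = ququarts / classical_variables(n)
    print(f"{n} bits:     {classical_variables(n)} variables")
    print(f"{n} qubits:   {qubits} coefficients")
    print(f"{n} ququarts: {ququarts} coefficients")
    print(f"ququarts vs bits: about {ratio:,.0f} times more")
```

For n = 10 the ququart count is 1,048,576, which is roughly 100,000 times the 10 variables held by 10 bits, in line with the figure quoted in the text.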

In this study, Alexey Melnikov and Leonid Fedichkin obtain a system of two qudits implemented as two entangled electrons quantum-walking around a so-called cycle graph. The entanglement of the two electrons is caused by the mutual electrostatic repulsion experienced by like charges. A larger number of qudits can be created by connecting quantum dots in a pattern of winding paths and adding more wandering electrons. The quantum walk approach to quantum computation is convenient because it is based on a natural process.
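To give a feel for what a quantum walk on a cycle graph looks like, here is a simplified sketch of a single electron hopping around a ring of quantum dots. It is not the two-electron interacting model from the study: it assumes one particle, no Coulomb repulsion, no noise, ħ = 1, and a Hamiltonian equal to minus the hopping rate times the ring's adjacency matrix. The function name and parameters are hypothetical. With those assumptions the walk can be solved exactly using the ring's Fourier modes, so only the standard library is needed.

```python
import cmath
import math


def ctqw_cycle_probabilities(num_dots, time, hopping=1.0, start=0):
    """Occupation probabilities of a continuous-time quantum walk on a ring.

    Assumes H = -hopping * A, where A is the adjacency matrix of a cycle
    graph with `num_dots` >= 3 nodes, and an electron that starts in the
    dot numbered `start`. Units are chosen so that hbar = 1.
    """
    probabilities = []
    for j in range(num_dots):
        amplitude = 0j
        for k in range(num_dots):
            # Energy of the k-th Fourier mode of the ring.
            energy = -2.0 * hopping * math.cos(2.0 * math.pi * k / num_dots)
            # Time evolution phase times the overlap of mode k with dots j and start.
            amplitude += cmath.exp(-1j * energy * time) * cmath.exp(
                2j * math.pi * k * (j - start) / num_dots
            )
        amplitude /= num_dots
        probabilities.append(abs(amplitude) ** 2)
    return probabilities


if __name__ == "__main__":
    for t in (0.0, 0.5, 1.0, 2.0):
        probs = ctqw_cycle_probabilities(num_dots=6, time=t)
        print(f"t = {t:.1f}: " + " ".join(f"{p:.3f}" for p in probs))
```

Even in this stripped-down version, the probability spreads in both directions around the ring and interferes with itself, which is the natural process the walk-based approach relies on. Capturing the entanglement described in the article would require adding a second electron and the repulsion between the two.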

So far, scientists have been unable to connect a sufficient number of qubits for the development of a quantum computer. The work of the Russian researchers brings computer science one step closer to a future when quantum computations are commonplace.

(Source: Moscow Institute of Physics and Technology, 3Tags.)